This work introduces a comprehensive dataset designed to advance AI-driven surgical robotics and medical imaging. By capturing detailed **anatomical landmarks of the upper airway**, we aim to support safer, more accurate **bronchoscopy and intubation** procedures, paving the way for improved patient outcomes and robust benchmarking in clinical AI.

🔑 **Highlights:**

– First-of-its-kind dataset focused on airway anatomical landmarks

– Enables benchmarking for automated navigation and intubation tasks

– Openly available to foster collaboration across robotics, AI, and healthcare communities

We hope this resource will accelerate innovation in **embodied intelligence for healthcare** and inspire new interdisciplinary collaborations.

👉 Read the full paper: https://rdcu.be/eS0d5

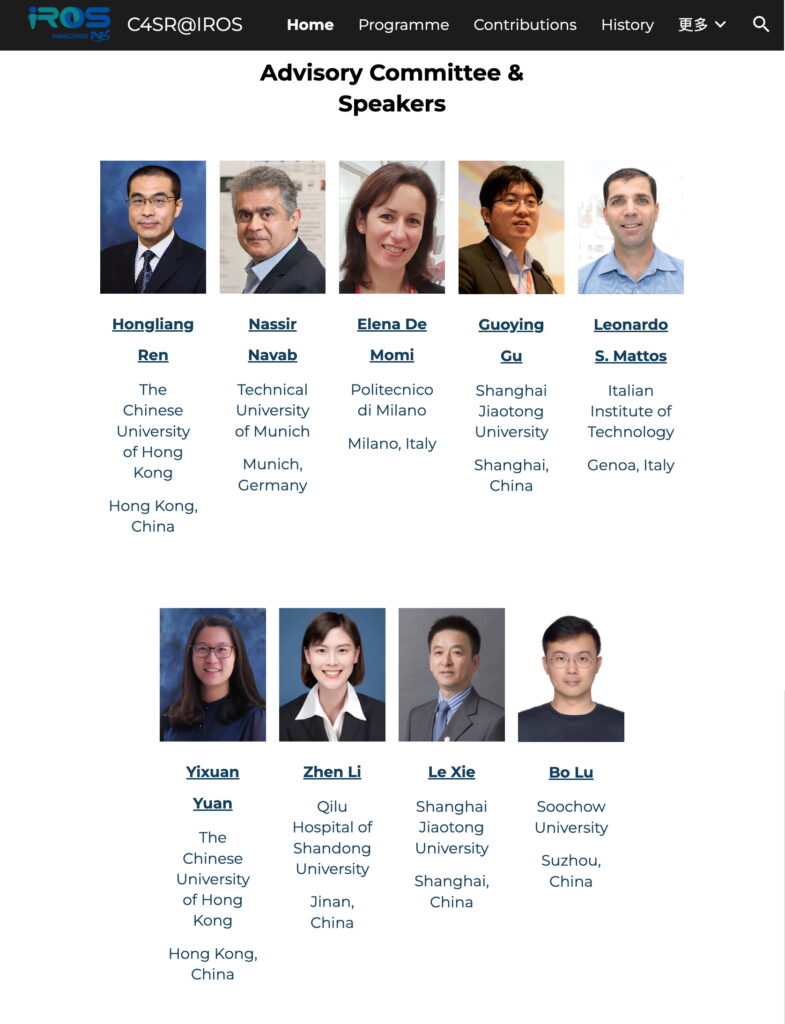

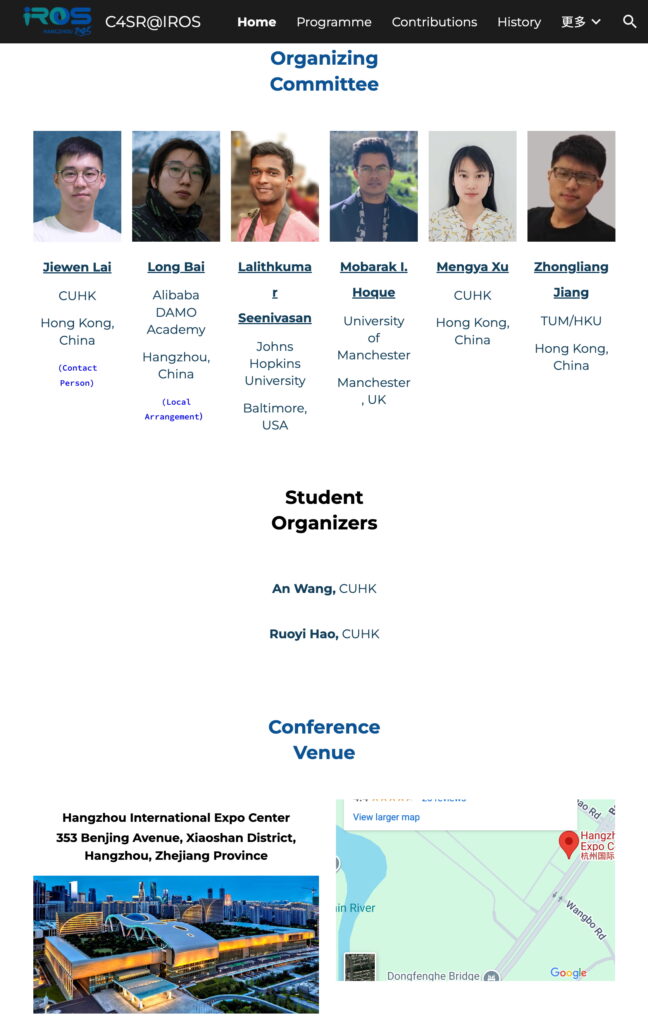

Grateful to all co-authors and collaborators from The Chinese University of Hong Kong (Ruoyi Hao, Zhiqing Tang, Catherine Po Ling Chan, Jason Ying Kuen Chan, Prof. Hongliang Ren), Hubei University of Technology (Zhang Yang), Huazhong University of Science and Technology (Yang Zhou), National University of Singapore (Lalithkumar Seenivasan), and Singapore General Hospital (Shuhui Xu, Neville Wei Yang Teo, Kaijun Tay, Vanessa Yee Jueen Tan, Jiun Fong Thong, Kimberley Liqin Kiong, Shaun Loh, Song Tar Toh, and Prof. Chwee Ming Lim), for making this possible. Excited to see how others build upon this foundation!